References:

- "Language Trees and Zipping". Physical Review Letters, 88:4 (2002), Complexity theory.
- Cavnar and Trenkle (1994). Proceedings of SDAIR-94, 3rd Annual Symposium on Document Analysis and Information Retrieval.
- IEEE Transactions on Information Theory 51(4), April 2005, 1523-1545.
- Dunning (1994). "Statistical Identification of Language". Technical Report MCCS 94-273, New Mexico State University.
- (2002) Extended comment on "Language Trees and Zipping". (This is a criticism of the data compression approach in favor of the Naive Bayes method.)

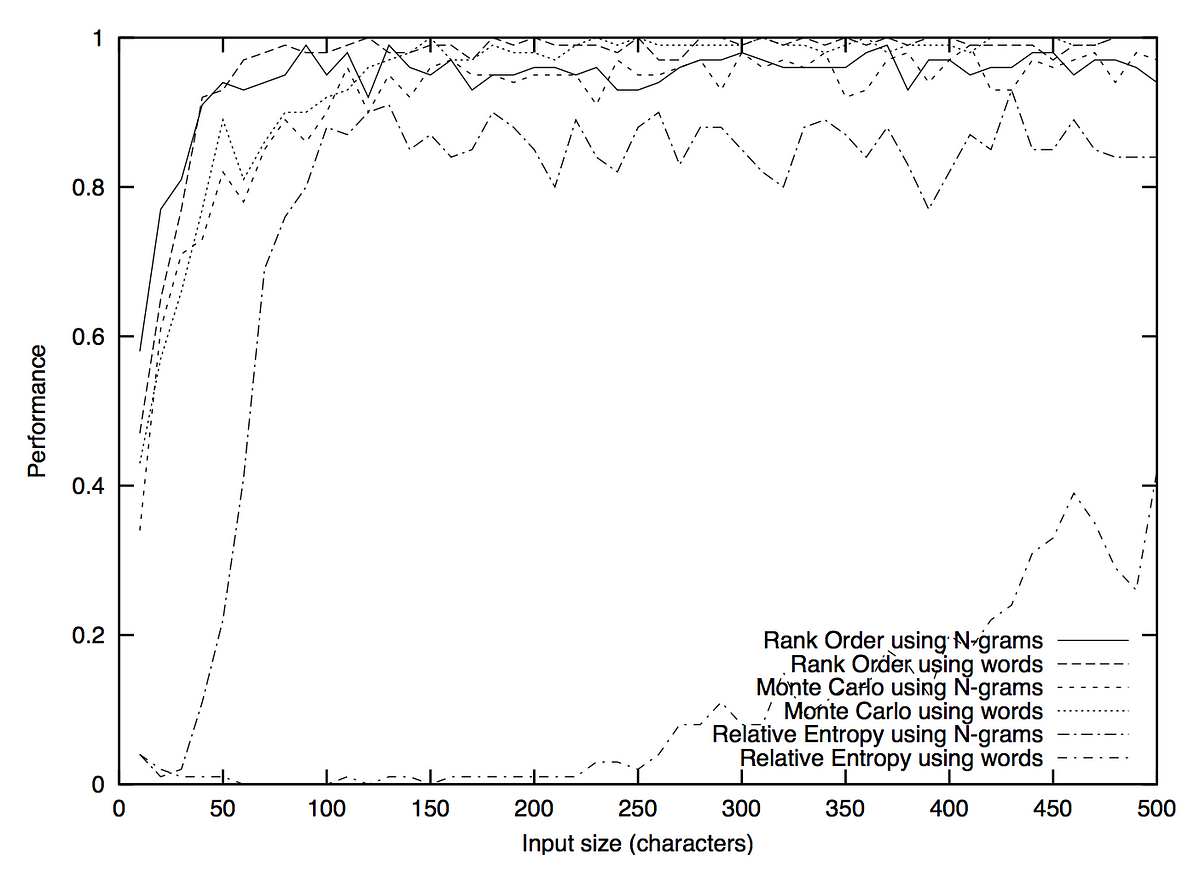

There are several statistical approaches to language identification, each using a different technique to classify the data. One technique is to compare the compressibility of the text to the compressibility of texts in a set of known languages. This approach is known as the mutual information based distance measure. The same technique can also be used to empirically construct family trees of languages that closely correspond to the trees constructed using historical methods. The mutual information based distance measure is essentially equivalent to more conventional model-based methods and is not generally considered to be either novel or better than simpler techniques.

Another technique, as described by Cavnar and Trenkle (1994) and Dunning (1994), is to create a language n-gram model from a "training text" for each of the languages. These models can be based on characters (Cavnar and Trenkle) or encoded bytes (Dunning); in the latter, language identification and character encoding detection are integrated. Then, for any piece of text needing to be identified, a similar model is made, and that model is compared to each stored language model. The most likely language is the one whose model is most similar to the model of the text being identified. This approach can be problematic when the input text is in a language for which there is no model. In that case, the method may return another, "most similar" language as its result. Also problematic for any approach are pieces of input text that are composed of several languages, as is common on the Web.

For a more recent method, see Řehůřek and Kolkus (2009). This method can detect multiple languages in an unstructured piece of text and works robustly on short texts of only a few words, something that the n-gram approaches struggle with.

An older statistical method by Grefenstette was based on the prevalence of certain function words (e.g., "the" in English).

A common non-statistical intuitive approach (though highly uncertain) is to look for common letter combinations, or distinctive diacritics or punctuation.

One of the great bottlenecks of language identification systems is distinguishing between closely related languages. Similar languages like Bulgarian and Macedonian or Indonesian and Malay present significant lexical and structural overlap, making it challenging for systems to discriminate between them.

In 2014 the DSL shared task was organized, providing a dataset (Tan et al., 2014) containing 13 different languages (and language varieties) in six language groups: Group A (Bosnian, Croatian, Serbian), Group B (Indonesian, Malaysian), Group C (Czech, Slovak), Group D (Brazilian Portuguese, European Portuguese), Group E (Peninsular Spanish, Argentine Spanish), Group F (American English, British English). The best system reached a performance of over 95% (Goutte et al., 2014). Results of the DSL shared task are described in Zampieri et al.

Apache OpenNLP includes a character n-gram based statistical detector and comes with a model that can distinguish 103 languages. Apache Tika contains a language detector for 18 languages.
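
The n-gram technique described in this article (build a profile per language from training text, build the same kind of profile for the input, pick the closest) can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions, not the implementation from either paper: the function names `ngram_profile`, `out_of_place` and `identify`, the parameters `n_max` and `top_k`, and the two toy training sentences are all made up for this example. The similarity measure used here is a rank-based "out-of-place" distance of the kind associated with Cavnar and Trenkle; other measures would fit the description equally well.

```python
from collections import Counter


def ngram_profile(text, n_max=3, top_k=300):
    """Return the most frequent character n-grams of `text`, most frequent first."""
    text = " ".join(text.lower().split())  # normalize case and whitespace
    counts = Counter()
    for n in range(1, n_max + 1):
        for i in range(len(text) - n + 1):
            counts[text[i:i + n]] += 1
    # Sort by descending count, then alphabetically, so ranks are deterministic.
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [gram for gram, _ in ranked[:top_k]]


def out_of_place(text_profile, lang_profile):
    """Sum of rank differences between two profiles.

    N-grams missing from the language profile receive a fixed maximum penalty.
    """
    rank = {gram: r for r, gram in enumerate(lang_profile)}
    max_penalty = len(lang_profile)
    return sum(
        abs(r - rank[gram]) if gram in rank else max_penalty
        for r, gram in enumerate(text_profile)
    )


def identify(text, models):
    """Return the language whose stored profile is closest to the text's profile."""
    profile = ngram_profile(text)
    return min(models, key=lambda lang: out_of_place(profile, models[lang]))


# Hypothetical toy training texts; real models need far more data per language.
TRAINING = {
    "english": "the quick brown fox jumps over the lazy dog and the cat sat on the mat",
    "german": "der schnelle braune fuchs springt auf den faulen hund und die katze sitzt im garten",
}
MODELS = {lang: ngram_profile(text) for lang, text in TRAINING.items()}

if __name__ == "__main__":
    print(identify("the dog and the cat", MODELS))
```

Note that `identify` always returns one of the stored languages, which is exactly the weakness mentioned in the text: input in a language without a model is still assigned to whichever model happens to be "most similar". A real system would typically add a distance threshold or an explicit "unknown" outcome.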
